Update

This commit is contained in:
parent e9730a8612
commit 60e97925aa

183
Reference/Segment_Anything.md
Normal file

@ -0,0 +1,183 @@

|

||||

## Latest updates -- SAM 2: Segment Anything in Images and Videos

|

||||

|

||||

Please check out our new release on [**Segment Anything Model 2 (SAM 2)**](https://github.com/facebookresearch/segment-anything-2).

|

||||

|

||||

* SAM 2 code: https://github.com/facebookresearch/segment-anything-2

|

||||

* SAM 2 demo: https://sam2.metademolab.com/

|

||||

* SAM 2 paper: https://arxiv.org/abs/2408.00714

|

||||

|

||||

|

||||

|

||||

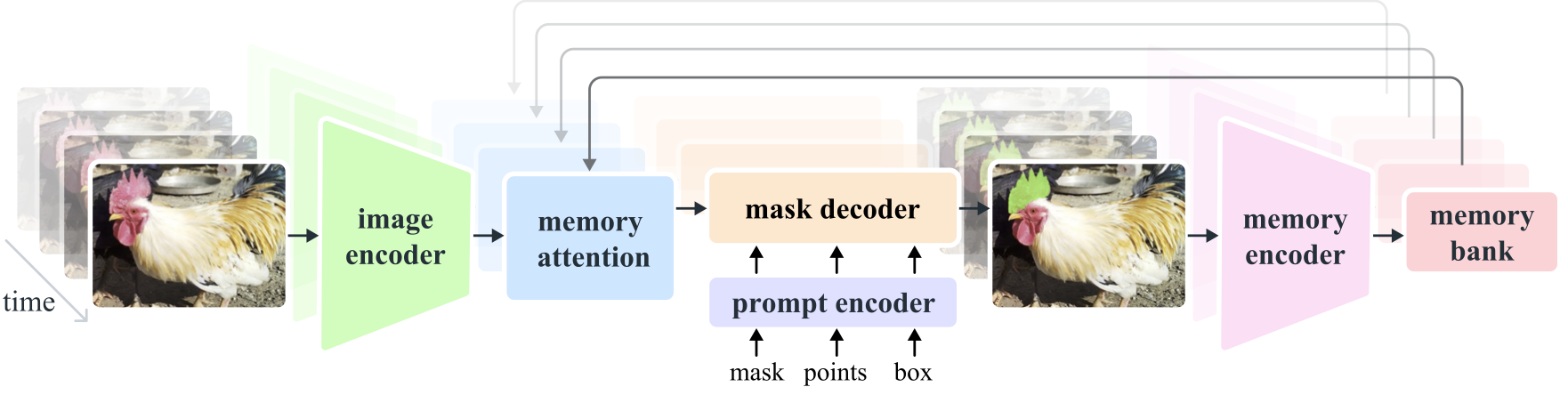

**Segment Anything Model 2 (SAM 2)** is a foundation model towards solving promptable visual segmentation in images and videos. We extend SAM to video by considering images as a video with a single frame. The model design is a simple transformer architecture with streaming memory for real-time video processing. We build a model-in-the-loop data engine, which improves model and data via user interaction, to collect [**our SA-V dataset**](https://ai.meta.com/datasets/segment-anything-video), the largest video segmentation dataset to date. SAM 2 trained on our data provides strong performance across a wide range of tasks and visual domains.

|

||||

|

||||

# Segment Anything

|

||||

|

||||

**[Meta AI Research, FAIR](https://ai.facebook.com/research/)**

|

||||

|

||||

[Alexander Kirillov](https://alexander-kirillov.github.io/), [Eric Mintun](https://ericmintun.github.io/), [Nikhila Ravi](https://nikhilaravi.com/), [Hanzi Mao](https://hanzimao.me/), Chloe Rolland, Laura Gustafson, [Tete Xiao](https://tetexiao.com), [Spencer Whitehead](https://www.spencerwhitehead.com/), Alex Berg, Wan-Yen Lo, [Piotr Dollar](https://pdollar.github.io/), [Ross Girshick](https://www.rossgirshick.info/)

|

||||

|

||||

[[`Paper`](https://ai.facebook.com/research/publications/segment-anything/)] [[`Project`](https://segment-anything.com/)] [[`Demo`](https://segment-anything.com/demo)] [[`Dataset`](https://segment-anything.com/dataset/index.html)] [[`Blog`](https://ai.facebook.com/blog/segment-anything-foundation-model-image-segmentation/)] [[`BibTeX`](#citing-segment-anything)]

|

||||

|

||||

|

||||

|

||||

The **Segment Anything Model (SAM)** produces high quality object masks from input prompts such as points or boxes, and it can be used to generate masks for all objects in an image. It has been trained on a [dataset](https://segment-anything.com/dataset/index.html) of 11 million images and 1.1 billion masks, and has strong zero-shot performance on a variety of segmentation tasks.

|

||||

|

||||

<p float="left">

|

||||

<img src="assets/masks1.png?raw=true" width="37.25%" />

|

||||

<img src="assets/masks2.jpg?raw=true" width="61.5%" />

|

||||

</p>

|

||||

|

||||

## Installation

|

||||

|

||||

The code requires `python>=3.8`, as well as `pytorch>=1.7` and `torchvision>=0.8`. Please follow the instructions [here](https://pytorch.org/get-started/locally/) to install both PyTorch and TorchVision dependencies. Installing both PyTorch and TorchVision with CUDA support is strongly recommended.

|

||||

|

||||

Install Segment Anything:

|

||||

|

||||

```

|

||||

pip install git+https://github.com/facebookresearch/segment-anything.git

|

||||

```

|

||||

|

||||

or clone the repository locally and install with

|

||||

|

||||

```

|

||||

git clone git@github.com:facebookresearch/segment-anything.git

|

||||

cd segment-anything; pip install -e .

|

||||

```

|

||||

|

||||

The following optional dependencies are necessary for mask post-processing, saving masks in COCO format, the example notebooks, and exporting the model in ONNX format. `jupyter` is also required to run the example notebooks.

|

||||

|

||||

```

|

||||

pip install opencv-python pycocotools matplotlib onnxruntime onnx

|

||||

```

|

||||

|

||||

## <a name="GettingStarted"></a>Getting Started

|

||||

|

||||

First download a [model checkpoint](#model-checkpoints). Then the model can be used in just a few lines to get masks from a given prompt:

|

||||

|

||||

```

|

||||

from segment_anything import SamPredictor, sam_model_registry

|

||||

sam = sam_model_registry["<model_type>"](checkpoint="<path/to/checkpoint>")

|

||||

predictor = SamPredictor(sam)

|

||||

predictor.set_image(<your_image>)

|

||||

masks, _, _ = predictor.predict(<input_prompts>)

|

||||

```
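
The `<input_prompts>` placeholder stands for keyword arguments of `SamPredictor.predict`, such as `point_coords` (an N×2 array of (X, Y) pixel coordinates), `point_labels` (1 for a foreground point, 0 for a background point) and `box` (a length-4 array in XYXY format). As a minimal sketch, assuming you want to re-prompt SAM from a record in the dataset format described under Dataset below, the hypothetical helpers here convert the stored XYWH `bbox` into an XYXY box and treat every stored `point_coords` entry as a foreground click. They return plain lists; the names `xywh_to_xyxy` and `annotation_to_prompts` are illustrative and not part of `segment_anything`.

```python
from typing import Dict, List, Sequence


def xywh_to_xyxy(bbox: Sequence[float]) -> List[float]:
    """Convert an [x, y, w, h] box (SA-1B annotation format) to [x0, y0, x1, y1]."""
    x, y, w, h = bbox
    return [x, y, x + w, y + h]


def annotation_to_prompts(annotation: Dict) -> Dict[str, list]:
    """Build keyword arguments for SamPredictor.predict from one annotation.

    Hypothetical helper: each point in "point_coords" is labeled 1 (foreground),
    and the XYWH "bbox" is turned into an XYXY box prompt.
    """
    points = [list(p) for p in annotation["point_coords"]]
    return {
        "point_coords": points,
        "point_labels": [1] * len(points),
        "box": xywh_to_xyxy(annotation["bbox"]),
    }
```

With NumPy available, something like `predictor.predict(**{k: np.array(v) for k, v in prompts.items()}, multimask_output=True)` would then return the candidate masks, their predicted IoU scores, and low-resolution logits.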

or generate masks for an entire image:

```
from segment_anything import SamAutomaticMaskGenerator, sam_model_registry
sam = sam_model_registry["<model_type>"](checkpoint="<path/to/checkpoint>")
mask_generator = SamAutomaticMaskGenerator(sam)
masks = mask_generator.generate(<your_image>)
```
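
`generate` returns a list of dicts, one per mask, with keys including `segmentation`, `area`, `bbox`, `predicted_iou` and `stability_score`. The generator already filters with its own `pred_iou_thresh` and `stability_score_thresh` arguments; the sketch below shows an additional, stricter post-hoc selection on that output. The function name `select_masks` and its default thresholds are illustrative assumptions, not values recommended by the SAM authors.

```python
from typing import Any, Dict, List


def select_masks(
    masks: List[Dict[str, Any]],
    min_iou: float = 0.9,
    min_stability: float = 0.95,
    min_area: int = 0,
) -> List[Dict[str, Any]]:
    """Keep confident masks from SamAutomaticMaskGenerator.generate, largest first."""
    kept = [
        m
        for m in masks
        if m["predicted_iou"] >= min_iou        # model's own quality estimate
        and m["stability_score"] >= min_stability
        and m["area"] >= min_area               # area in pixels
    ]
    return sorted(kept, key=lambda m: m["area"], reverse=True)
```

Sorting by area puts large regions (for example the main subject) first, which is convenient when only the top few masks are inspected.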

Additionally, masks can be generated for images from the command line:

```
python scripts/amg.py --checkpoint <path/to/checkpoint> --model-type <model_type> --input <image_or_folder> --output <path/to/output>
```

See the example notebooks on [using SAM with prompts](/notebooks/predictor_example.ipynb) and [automatically generating masks](/notebooks/automatic_mask_generator_example.ipynb) for more details.

<p float="left">
  <img src="assets/notebook1.png?raw=true" width="49.1%" />
  <img src="assets/notebook2.png?raw=true" width="48.9%" />
</p>

## ONNX Export

SAM's lightweight mask decoder can be exported to ONNX format so that it can be run in any environment that supports ONNX Runtime, such as in the browser, as showcased in the [demo](https://segment-anything.com/demo). Export the model with

```
python scripts/export_onnx_model.py --checkpoint <path/to/checkpoint> --model-type <model_type> --output <path/to/output>
```

See the [example notebook](https://github.com/facebookresearch/segment-anything/blob/main/notebooks/onnx_model_example.ipynb) for details on how to combine image preprocessing via SAM's backbone with mask prediction using the ONNX model. It is recommended to use the latest stable version of PyTorch for ONNX export.
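
The exported decoder expects prompt coordinates in the frame of the resized image (longest side 1024 pixels by default), not in original pixel coordinates. As we understand the example notebook, it maps clicks with `predictor.transform.apply_coords` and, when no box prompt is used, appends a padding point `(0, 0)` with label `-1`. The sketch below reimplements that preparation in plain Python under those assumptions; `preprocess_shape` and `onnx_point_inputs` are hypothetical names, and real code should prefer the predictor's own transform.

```python
from typing import List, Sequence, Tuple


def preprocess_shape(old_h: int, old_w: int, long_side: int = 1024) -> Tuple[int, int]:
    """Image size after scaling its longest side to `long_side` (rounded to nearest)."""
    scale = long_side / max(old_h, old_w)
    return int(old_h * scale + 0.5), int(old_w * scale + 0.5)


def onnx_point_inputs(
    points: Sequence[Sequence[float]],
    labels: Sequence[int],
    image_hw: Tuple[int, int],
    long_side: int = 1024,
) -> Tuple[List[List[float]], List[float]]:
    """Rescale (x, y) clicks to the model input frame and append the padding point."""
    old_h, old_w = image_hw
    new_h, new_w = preprocess_shape(old_h, old_w, long_side)
    # Assumption: a padding point (0, 0) labeled -1 is added when no box is given.
    padded = [tuple(p) for p in points] + [(0.0, 0.0)]
    coords = [[x * new_w / old_w, y * new_h / old_h] for x, y in padded]
    return coords, [float(lab) for lab in labels] + [-1.0]
```

The resulting lists would still need a leading batch dimension and `float32` conversion before being fed to ONNX Runtime.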

### Web demo

The `demo/` folder has a simple one-page React app which shows how to run mask prediction with the exported ONNX model in a web browser with multithreading. Please see [`demo/README.md`](https://github.com/facebookresearch/segment-anything/blob/main/demo/README.md) for more details.

## <a name="Models"></a>Model Checkpoints

Three versions of the model are available, with different backbone sizes. These models can be instantiated by running

```
from segment_anything import sam_model_registry
sam = sam_model_registry["<model_type>"](checkpoint="<path/to/checkpoint>")
```

Click the links below to download the checkpoint for the corresponding model type.

- **`default` or `vit_h`: [ViT-H SAM model.](https://dl.fbaipublicfiles.com/segment_anything/sam_vit_h_4b8939.pth)**
- `vit_l`: [ViT-L SAM model.](https://dl.fbaipublicfiles.com/segment_anything/sam_vit_l_0b3195.pth)
- `vit_b`: [ViT-B SAM model.](https://dl.fbaipublicfiles.com/segment_anything/sam_vit_b_01ec64.pth)
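
To script the download instead of clicking, a minimal sketch is to keep the three URLs from the list above in a table keyed by the same model-type strings that `sam_model_registry` accepts, treating `default` as an alias for `vit_h`. The helper names below are hypothetical, and `download_checkpoint` needs network access.

```python
import os
import posixpath
from urllib.parse import urlparse
from urllib.request import urlretrieve

# URLs copied from the checkpoint list above; "default" is an alias for "vit_h".
CHECKPOINT_URLS = {
    "vit_h": "https://dl.fbaipublicfiles.com/segment_anything/sam_vit_h_4b8939.pth",
    "vit_l": "https://dl.fbaipublicfiles.com/segment_anything/sam_vit_l_0b3195.pth",
    "vit_b": "https://dl.fbaipublicfiles.com/segment_anything/sam_vit_b_01ec64.pth",
}


def checkpoint_url(model_type: str) -> str:
    """Return the download URL for a sam_model_registry key."""
    key = "vit_h" if model_type == "default" else model_type
    if key not in CHECKPOINT_URLS:
        raise ValueError(f"unknown model type: {model_type!r}")
    return CHECKPOINT_URLS[key]


def checkpoint_filename(model_type: str) -> str:
    """File name of the checkpoint, taken from the last path segment of its URL."""
    return posixpath.basename(urlparse(checkpoint_url(model_type)).path)


def download_checkpoint(model_type: str, dest_dir: str = ".") -> str:
    """Download the checkpoint once (skips if present) and return its local path."""
    path = os.path.join(dest_dir, checkpoint_filename(model_type))
    if not os.path.exists(path):
        urlretrieve(checkpoint_url(model_type), path)
    return path
```

The returned path can then be passed as the `checkpoint=` argument of `sam_model_registry[model_type]`.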

## Dataset

See [here](https://ai.facebook.com/datasets/segment-anything/) for an overview of the dataset. The dataset can be downloaded [here](https://ai.facebook.com/datasets/segment-anything-downloads/). By downloading the datasets you agree that you have read and accepted the terms of the SA-1B Dataset Research License.

We save the masks for each image as a JSON file, which can be loaded as a Python dictionary in the format below.

```python
{
    "image"                 : image_info,
    "annotations"           : [annotation],
}

image_info {
    "image_id"              : int,              # Image id
    "width"                 : int,              # Image width
    "height"                : int,              # Image height
    "file_name"             : str,              # Image filename
}

annotation {
    "id"                    : int,              # Annotation id
    "segmentation"          : dict,             # Mask saved in COCO RLE format.
    "bbox"                  : [x, y, w, h],     # The box around the mask, in XYWH format
    "area"                  : int,              # The area in pixels of the mask
    "predicted_iou"         : float,            # The model's own prediction of the mask's quality
    "stability_score"       : float,            # A measure of the mask's quality
    "crop_box"              : [x, y, w, h],     # The crop of the image used to generate the mask, in XYWH format
    "point_coords"          : [[x, y]],         # The point coordinates input to the model to generate the mask
}
```
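
The schema above is descriptive rather than valid Python. As a sketch, the same structure can be written as `TypedDict` declarations so that a type checker knows the field names, together with a small loader that checks the two top-level keys. The class and function names (`ImageRecord`, `parse_record`, `load_record`, `summarize`) are hypothetical.

```python
import json
from typing import List, TypedDict


class ImageInfo(TypedDict):
    image_id: int
    width: int
    height: int
    file_name: str


class Annotation(TypedDict):
    id: int
    segmentation: dict          # COCO RLE: {"size": [h, w], "counts": str}
    bbox: List[float]           # XYWH
    area: int
    predicted_iou: float
    stability_score: float
    crop_box: List[float]       # XYWH
    point_coords: List[List[float]]


class ImageRecord(TypedDict):
    image: ImageInfo
    annotations: List[Annotation]


def parse_record(text: str) -> ImageRecord:
    """Parse one per-image JSON document and check its top-level keys."""
    record = json.loads(text)
    missing = [k for k in ("image", "annotations") if k not in record]
    if missing:
        raise KeyError(f"missing keys: {missing}")
    return record


def load_record(path: str) -> ImageRecord:
    with open(path, encoding="utf-8") as f:
        return parse_record(f.read())


def summarize(record: ImageRecord) -> dict:
    """Small summary: file name, number of masks, and the largest mask area."""
    anns = record["annotations"]
    return {
        "file_name": record["image"]["file_name"],
        "num_masks": len(anns),
        "largest_area": max((a["area"] for a in anns), default=0),
    }
```

`TypedDict` only adds static type information; at runtime the loaded record is still an ordinary `dict`.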

Image IDs are listed in `sa_images_ids.txt`, which can also be downloaded from the above [link](https://ai.facebook.com/datasets/segment-anything-downloads/).

To decode a mask in COCO RLE format into a binary mask:

```
from pycocotools import mask as mask_utils
mask = mask_utils.decode(annotation["segmentation"])
```

See [here](https://github.com/cocodataset/cocoapi/blob/master/PythonAPI/pycocotools/mask.py) for more instructions to manipulate masks stored in RLE format.
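
`pycocotools` is the tool to use in practice. To show what the compressed `counts` string actually contains, here is a pure-Python sketch ported from our reading of the pycocotools C implementation (the `rleFrString`/`rleToString` routines): each run length is packed into 5-bit chunks stored as printable characters (offset 48), a continuation bit marks multi-character values, and from the fourth run onward each value is stored as a difference from the run two positions earlier. Runs alternate between 0s and 1s, start with 0s, and walk the mask in column-major order; `size` is `[height, width]`. The function names are hypothetical and the code is meant for understanding, not speed.

```python
from typing import Dict, List


def decode_counts(s: str) -> List[int]:
    """Decode a compressed COCO RLE counts string into run lengths."""
    counts: List[int] = []
    p = 0
    while p < len(s):
        x, k, more = 0, 0, True
        while more:
            c = ord(s[p]) - 48
            x |= (c & 0x1F) << (5 * k)   # 5 payload bits per character
            more = bool(c & 0x20)        # 0x20 marks a continuation character
            p += 1
            k += 1
            if not more and (c & 0x10):  # sign-extend negative values
                x |= -1 << (5 * k)
        if len(counts) > 2:              # 4th run onward is a delta from counts[-2]
            x += counts[-2]
        counts.append(x)
    return counts


def encode_counts(counts: List[int]) -> str:
    """Inverse of decode_counts (handy for testing)."""
    chars = []
    for i, x in enumerate(counts):
        if i > 2:
            x -= counts[i - 2]
        more = True
        while more:
            c = x & 0x1F
            x >>= 5
            more = (x != -1) if (c & 0x10) else (x != 0)
            if more:
                c |= 0x20
            chars.append(chr(c + 48))
    return "".join(chars)


def rle_to_mask(rle: Dict) -> List[List[int]]:
    """Expand {"size": [h, w], "counts": str} into a binary mask (list of rows)."""
    h, w = rle["size"]
    flat: List[int] = []
    value = 0
    for run in decode_counts(rle["counts"]):
        flat.extend([value] * run)
        value = 1 - value
    if len(flat) != h * w:
        raise ValueError("run lengths do not match the mask size")
    # Runs are stored in column-major (Fortran) order.
    return [[flat[col * h + row] for col in range(w)] for row in range(h)]
```

For example, `{"size": [2, 3], "counts": "321"}` describes three 0s, two 1s and one 0 laid out column by column.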

## License

The model is licensed under the [Apache 2.0 license](LICENSE).

## Contributing

See [contributing](CONTRIBUTING.md) and the [code of conduct](CODE_OF_CONDUCT.md).

## Contributors

The Segment Anything project was made possible with the help of many contributors (alphabetical):

Aaron Adcock, Vaibhav Aggarwal, Morteza Behrooz, Cheng-Yang Fu, Ashley Gabriel, Ahuva Goldstand, Allen Goodman, Sumanth Gurram, Jiabo Hu, Somya Jain, Devansh Kukreja, Robert Kuo, Joshua Lane, Yanghao Li, Lilian Luong, Jitendra Malik, Mallika Malhotra, William Ngan, Omkar Parkhi, Nikhil Raina, Dirk Rowe, Neil Sejoor, Vanessa Stark, Bala Varadarajan, Bram Wasti, Zachary Winstrom

## Citing Segment Anything

If you use SAM or SA-1B in your research, please use the following BibTeX entry.

```
@article{kirillov2023segany,
  title={Segment Anything},
  author={Kirillov, Alexander and Mintun, Eric and Ravi, Nikhila and Mao, Hanzi and Rolland, Chloe and Gustafson, Laura and Xiao, Tete and Whitehead, Spencer and Berg, Alexander C. and Lo, Wan-Yen and Doll{\'a}r, Piotr and Girshick, Ross},
  journal={arXiv:2304.02643},
  year={2023}
}
```

68
Reference/detectron2.md
Normal file

@ -0,0 +1,68 @@

<img src=".github/Detectron2-Logo-Horz.svg" width="300" >

<a href="https://opensource.facebook.com/support-ukraine">
  <img src="https://img.shields.io/badge/Support-Ukraine-FFD500?style=flat&labelColor=005BBB" alt="Support Ukraine - Help Provide Humanitarian Aid to Ukraine." />
</a>

Detectron2 is Facebook AI Research's next-generation library
that provides state-of-the-art detection and segmentation algorithms.
It is the successor of
[Detectron](https://github.com/facebookresearch/Detectron/)
and [maskrcnn-benchmark](https://github.com/facebookresearch/maskrcnn-benchmark/).
It supports a number of computer vision research projects and production applications at Facebook.

<div align="center">
  <img src="https://user-images.githubusercontent.com/1381301/66535560-d3422200-eace-11e9-9123-5535d469db19.png"/>
</div>
<br>

## Learn More about Detectron2

Explain Like I’m 5: Detectron2 | Using Machine Learning with Detectron2
:-------------------------:|:-------------------------:
[YouTube video](https://www.youtube.com/watch?v=1oq1Ye7dFqc) | [YouTube video](https://www.youtube.com/watch?v=eUSgtfK4ivk)

## What's New
* Includes new capabilities such as panoptic segmentation, DensePose, Cascade R-CNN, rotated bounding boxes, PointRend,
  DeepLab, ViTDet, MViTv2 etc.
* Used as a library to support building [research projects](projects/) on top of it.
* Models can be exported to TorchScript format or Caffe2 format for deployment.
* It [trains much faster](https://detectron2.readthedocs.io/notes/benchmarks.html).

See our [blog post](https://ai.facebook.com/blog/-detectron2-a-pytorch-based-modular-object-detection-library-/)
to see more demos and learn about detectron2.

## Installation

See [installation instructions](https://detectron2.readthedocs.io/tutorials/install.html).

## Getting Started

See [Getting Started with Detectron2](https://detectron2.readthedocs.io/tutorials/getting_started.html),
and the [Colab Notebook](https://colab.research.google.com/drive/16jcaJoc6bCFAQ96jDe2HwtXj7BMD_-m5)
to learn about basic usage.

Learn more at our [documentation](https://detectron2.readthedocs.org).
And see [projects/](projects/) for some projects that are built on top of detectron2.

## Model Zoo and Baselines

We provide a large set of baseline results and trained models available for download in the [Detectron2 Model Zoo](MODEL_ZOO.md).

## License

Detectron2 is released under the [Apache 2.0 license](LICENSE).

## Citing Detectron2

If you use Detectron2 in your research or wish to refer to the baseline results published in the [Model Zoo](MODEL_ZOO.md), please use the following BibTeX entry.

```BibTeX
@misc{wu2019detectron2,
  author =       {Yuxin Wu and Alexander Kirillov and Francisco Massa and
                  Wan-Yen Lo and Ross Girshick},
  title =        {Detectron2},
  howpublished = {\url{https://github.com/facebookresearch/detectron2}},
  year =         {2019}
}
```

@ -1,4 +1,6 @@
@echo off
SET MICROMAMBA_EXE=%~dp0micromamba.exe
SET MAMBA_ROOT_PREFIX=%~dp0micromamba
SET CUDA_HOME="C:\Program Files\NVIDIA GPU Computing Toolkit\CUDA\v11.8"
SET PATH=%CUDA_HOME%\bin;%PATH%
SET PROJECT_DIR=%~dp0
@ -10,4 +12,4 @@ SET BLENDER_DIR="C:\Program Files\Blender Foundation\Blender 3.6"
SET VS2019_DIR="C:\Program Files (x86)\Microsoft Visual Studio\2019\BuildTools"
SET VS2019_VCVARS="%VS2019_DIR%\VC\Auxiliary\Build\vcvars64.bat"

CALL micromamba activate gaussian_splatting_hair
CALL "%MICROMAMBA_EXE%" activate -p %MAMBA_ROOT_PREFIX%\envs\gaussian_splatting_hair

@ -1,4 +1,6 @@
@echo off
SET MICROMAMBA_EXE=%~dp0micromamba.exe
SET MAMBA_ROOT_PREFIX=%~dp0micromamba
SET CUDA_HOME="C:\Program Files\NVIDIA GPU Computing Toolkit\CUDA\v11.8"
SET PATH=%CUDA_HOME%\bin;%PATH%
SET PROJECT_DIR=%~dp0
@ -9,5 +11,8 @@ SET GDOWN_CACHE=%PROJECT_DIR%\cache\gdown
SET BLENDER_DIR="C:\Program Files\Blender Foundation\Blender 3.6"
SET VS2019_DIR="C:\Program Files (x86)\Microsoft Visual Studio\2019\BuildTools"
SET VS2019_VCVARS="%VS2019_DIR%\VC\Auxiliary\Build\vcvars64.bat"
SET DISTUTILS_USE_SDK=1
SET MSSdk=1

CALL micromamba activate matte_anything
CALL "%MICROMAMBA_EXE%" activate -p %MAMBA_ROOT_PREFIX%\envs\matte_anything
IF EXIST %VS2019_VCVARS% CALL %VS2019_VCVARS%

@ -1,4 +1,6 @@
@echo off
SET MICROMAMBA_EXE=%~dp0micromamba.exe
SET MAMBA_ROOT_PREFIX=%~dp0micromamba
SET CUDA_HOME="C:\Program Files\NVIDIA GPU Computing Toolkit\CUDA\v11.8"
SET PATH=%CUDA_HOME%\bin;%PATH%
SET PROJECT_DIR=%~dp0
@ -10,4 +12,4 @@ SET BLENDER_DIR="C:\Program Files\Blender Foundation\Blender 3.6"
SET VS2019_DIR="C:\Program Files (x86)\Microsoft Visual Studio\2019\BuildTools"
SET VS2019_VCVARS="%VS2019_DIR%\VC\Auxiliary\Build\vcvars64.bat"

CALL micromamba activate openpose
CALL "%MICROMAMBA_EXE%" activate -p %MAMBA_ROOT_PREFIX%\envs\openpose

@ -1,4 +1,6 @@
@echo off
SET MICROMAMBA_EXE=%~dp0micromamba.exe
SET MAMBA_ROOT_PREFIX=%~dp0micromamba
SET CUDA_HOME="C:\Program Files\NVIDIA GPU Computing Toolkit\CUDA\v11.8"
SET PATH=%CUDA_HOME%\bin;%PATH%
SET PROJECT_DIR=%~dp0
@ -10,4 +12,4 @@ SET BLENDER_DIR="C:\Program Files\Blender Foundation\Blender 3.6"
SET VS2019_DIR="C:\Program Files (x86)\Microsoft Visual Studio\2019\BuildTools"
SET VS2019_VCVARS="%VS2019_DIR%\VC\Auxiliary\Build\vcvars64.bat"

CALL micromamba activate pixie-env
CALL "%MICROMAMBA_EXE%" activate -p %MAMBA_ROOT_PREFIX%\envs\pixie-env

64
install.bat

@ -2,17 +2,32 @@
setlocal enabledelayedexpansion

REM Set environment variables
SET CUDA_HOME=C:\Program Files\NVIDIA GPU Computing Toolkit\CUDA\v11.8
SET MICROMAMBA_EXE=%~dp0micromamba.exe
SET CUDA_HOME="C:\Program Files\NVIDIA GPU Computing Toolkit\CUDA\v11.8"
SET PATH=%CUDA_HOME%\bin;%PATH%
SET PROJECT_DIR=%~dp0
SET PYTHONDONTWRITEBYTECODE=1
SET GDOWN_CACHE=cache\gdown
SET TORCH_HOME=cache\torch
SET HF_HOME=cache\huggingface
SET BLENDER_DIR=C:\Program Files\Blender Foundation\Blender 3.6
SET VS2019_DIR=C:\Program Files (x86)\Microsoft Visual Studio\2019\BuildTools
SET BLENDER_DIR="C:\Program Files\Blender Foundation\Blender 3.6"
SET VS2019_DIR="C:\Program Files (x86)\Microsoft Visual Studio\2019\BuildTools"
SET VS2019_VCVARS=%VS2019_DIR%\VC\Auxiliary\Build\vcvars64.bat

REM Check micromamba
IF NOT EXIST "%MICROMAMBA_EXE%" (
echo ERROR: micromamba not found at %MICROMAMBA_EXE%
echo Please install micromamba from https://mamba.readthedocs.io/en/latest/installation.html
exit /b 1
)

REM Set the micromamba root directory
SET MAMBA_ROOT_PREFIX=%PROJECT_DIR%\micromamba
mkdir %MAMBA_ROOT_PREFIX%

REM Initialize micromamba
CALL "%MICROMAMBA_EXE%" shell init --root-prefix=%MAMBA_ROOT_PREFIX%

REM Check the required environment and dependencies
IF NOT EXIST "%CUDA_HOME%" (
echo ERROR: CUDA 11.8 not found at %CUDA_HOME%
@ -31,6 +46,13 @@ IF NOT EXIST "%VS2019_VCVARS%" (
exit /b 1
)

REM Check the CUDA version
nvcc --version | findstr "release 11.8" >nul
IF %ERRORLEVEL% NEQ 0 (
echo ERROR: CUDA 11.8 not found or version mismatch
exit /b 1
)

REM Check COLMAP
where colmap >nul 2>nul
IF %ERRORLEVEL% NEQ 0 (
@ -40,16 +62,16 @@ IF %ERRORLEVEL% NEQ 0 (
)

REM Create cache directories
mkdir cache\gdown
mkdir cache\torch
mkdir cache\huggingface
mkdir cache\gdown 2>nul
mkdir cache\torch 2>nul
mkdir cache\huggingface 2>nul

REM Create the ext directory
mkdir ext
mkdir ext 2>nul
cd ext

REM Clone external libraries
git clone https://github.com/CMU-Perceptual-Computing-Lab/openpose --depth 1
git clone --depth 1 https://github.com/CMU-Perceptual-Computing-Lab/openpose
cd openpose
git submodule update --init --recursive --remote
cd ..
@ -80,13 +102,13 @@ cd ..
git clone https://github.com/SSL92/hyperIQA

REM Create environments
CALL micromamba create -n gaussian_splatting_hair python=3.8 pytorch=2.0.0 torchvision pytorch-cuda=11.8 cmake ninja -c pytorch -c nvidia -c conda-forge -y
CALL micromamba create -n matte_anything pytorch=2.0.0 pytorch-cuda=11.8 torchvision tensorboard timm=0.5.4 opencv=4.5.3 mkl=2024.0 setuptools=58.2.0 easydict wget scikit-image gradio=3.46.1 fairscale -c pytorch -c nvidia -c conda-forge -y
CALL micromamba create -n openpose python=3.8 cmake=3.20 -c conda-forge -y
CALL micromamba create -n pixie-env python=3.8 pytorch=2.0.0 torchvision pytorch-cuda=11.8 fvcore pytorch3d==0.7.5 kornia matplotlib -c pytorch -c nvidia -c fvcore -c conda-forge -c pytorch3d -y
CALL "%MICROMAMBA_EXE%" create -p %MAMBA_ROOT_PREFIX%\envs\gaussian_splatting_hair python=3.8 pytorch=2.0.0 torchvision pytorch-cuda=11.8 cmake ninja setuptools=58.2.0 -c pytorch -c nvidia -c conda-forge -y
CALL "%MICROMAMBA_EXE%" create -p %MAMBA_ROOT_PREFIX%\envs\matte_anything pytorch=2.0.0 pytorch-cuda=11.8 torchvision tensorboard timm=0.5.4 opencv=4.5.3 mkl=2024.0 setuptools=58.2.0 easydict wget scikit-image gradio=3.46.1 fairscale -c pytorch -c nvidia -c conda-forge -y
CALL "%MICROMAMBA_EXE%" create -p %MAMBA_ROOT_PREFIX%\envs\openpose python=3.8 cmake=3.20 -c conda-forge -y
CALL "%MICROMAMBA_EXE%" create -p %MAMBA_ROOT_PREFIX%\envs\pixie-env python=3.8 pytorch=2.0.0 torchvision pytorch-cuda=11.8 fvcore pytorch3d==0.7.5 kornia matplotlib -c pytorch -c nvidia -c fvcore -c conda-forge -c pytorch3d -c bottler -c iopath -y

REM Set up the gaussian_splatting_hair environment
CALL activate_gaussian_splatting_hair.bat
CALL "%MICROMAMBA_EXE%" activate -p %MAMBA_ROOT_PREFIX%\envs\gaussian_splatting_hair
pip install -r requirements.txt
cd %PROJECT_DIR%\ext\pytorch3d
pip install -e .
@ -113,8 +135,22 @@ del pixie_data.tar.gz
REM Set up the Matte-Anything environment
CALL activate_matte_anything.bat
cd %PROJECT_DIR%\ext\Matte-Anything

REM Patch PyTorch headers for Windows compatibility
IF EXIST "%CONDA_PREFIX%\Lib\site-packages\torch\include\torch\csrc\jit\runtime\argument_spec.h" (
echo Patching argument_spec.h...
powershell -Command "(gc '%CONDA_PREFIX%\Lib\site-packages\torch\include\torch\csrc\jit\runtime\argument_spec.h') -replace 'static constexpr size_t DEPTH_LIMIT = 128;', 'static const size_t DEPTH_LIMIT = 128;' | Out-File -encoding ASCII '%CONDA_PREFIX%\Lib\site-packages\torch\include\torch\csrc\jit\runtime\argument_spec.h'"
)
IF EXIST "%CONDA_PREFIX%\Lib\site-packages\torch\include\pybind11\cast.h" (
echo Patching cast.h...
powershell -Command "(gc '%CONDA_PREFIX%\Lib\site-packages\torch\include\pybind11\cast.h') -replace 'explicit operator type&\(\) { return \*\(this->value\); }', 'explicit operator type&() { return *((type*)this->value); }' | Out-File -encoding ASCII '%CONDA_PREFIX%\Lib\site-packages\torch\include\pybind11\cast.h'"
)

REM Install SAM
pip install git+https://github.com/facebookresearch/segment-anything.git
pip install 'git+https://github.com/facebookresearch/detectron2.git'

REM Install detectron2
pip install "git+https://github.com/facebookresearch/detectron2.git@v0.6"
pip install -e GroundingDINO
pip install supervision==0.22.0
mkdir pretrained

@ -1,5 +1,5 @@
numpy>=1.21.0
scipy>=1.7.0
numpy>=1.21.0,<2.0.0
scipy>=1.7.0,<2.0.0
pillow>=8.3.1
tqdm>=4.62.2
matplotlib>=3.4.2
@ -32,4 +32,5 @@ clean-fid>=0.1.35
clip>=0.2.0
torchdiffeq>=0.2.3
torchsde>=0.2.5
resize-right>=0.0.2
resize-right>=0.0.2
colmap>=3.10

170
run.bat

@ -2,6 +2,8 @@
setlocal enabledelayedexpansion

REM Set environment variables
SET MICROMAMBA_EXE=%~dp0micromamba.exe
SET MAMBA_ROOT_PREFIX=%~dp0micromamba
SET GPU=0
SET CAMERA=PINHOLE
SET EXP_NAME_1=stage1
@ -26,86 +28,146 @@ IF NOT EXIST "%BLENDER_DIR%" (
echo ERROR: BLENDER_DIR path does not exist: %BLENDER_DIR%
exit /b 1
)
IF NOT EXIST "%MICROMAMBA_EXE%" (
echo ERROR: micromamba not found at %MICROMAMBA_EXE%
echo Please install micromamba from https://mamba.readthedocs.io/en/latest/installation.html
exit /b 1
)

REM ##################
REM # Preprocessing stage #
REM ##################

REM Arrange the raw images into the 3D Gaussian Splatting format
CALL activate_gaussian_splatting_hair.bat
CALL "%MICROMAMBA_EXE%" activate -p %MAMBA_ROOT_PREFIX%\envs\gaussian_splatting_hair
cd %PROJECT_DIR%\src\preprocessing
set CUDA_VISIBLE_DEVICES=%GPU%
python preprocess_raw_images.py --data_path %DATA_PATH%
IF %ERRORLEVEL% NEQ 0 (
echo ERROR: Failed to run command
exit /b 1
)

REM Run COLMAP reconstruction and undistort the images and cameras
CALL activate_gaussian_splatting_hair.bat
CALL "%MICROMAMBA_EXE%" activate -p %MAMBA_ROOT_PREFIX%\envs\gaussian_splatting_hair
cd %PROJECT_DIR%\src
set CUDA_VISIBLE_DEVICES=%GPU%
python convert.py -s %DATA_PATH% --camera %CAMERA% --max_size 1024
IF %ERRORLEVEL% NEQ 0 (
echo ERROR: Failed to run command
exit /b 1
)

REM Run Matte-Anything
CALL activate_matte_anything.bat
CALL "%MICROMAMBA_EXE%" activate -p %MAMBA_ROOT_PREFIX%\envs\matte_anything
cd %PROJECT_DIR%\src\preprocessing
set CUDA_VISIBLE_DEVICES=%GPU%
python calc_masks.py --data_path %DATA_PATH% --image_format png --max_size 2048
IF %ERRORLEVEL% NEQ 0 (
echo ERROR: Failed to run command
exit /b 1
)

REM Filter images by IQA score
CALL activate_gaussian_splatting_hair.bat
CALL "%MICROMAMBA_EXE%" activate -p %MAMBA_ROOT_PREFIX%\envs\gaussian_splatting_hair
cd %PROJECT_DIR%\src\preprocessing
set CUDA_VISIBLE_DEVICES=%GPU%
python filter_extra_images.py --data_path %DATA_PATH% --max_imgs 128
IF %ERRORLEVEL% NEQ 0 (
echo ERROR: Failed to run command
exit /b 1
)

REM Resize images
CALL activate_gaussian_splatting_hair.bat
CALL "%MICROMAMBA_EXE%" activate -p %MAMBA_ROOT_PREFIX%\envs\gaussian_splatting_hair
cd %PROJECT_DIR%\src\preprocessing
set CUDA_VISIBLE_DEVICES=%GPU%
python resize_images.py --data_path %DATA_PATH%
IF %ERRORLEVEL% NEQ 0 (
echo ERROR: Failed to run command
exit /b 1
)

REM Compute orientation maps
CALL activate_gaussian_splatting_hair.bat
CALL "%MICROMAMBA_EXE%" activate -p %MAMBA_ROOT_PREFIX%\envs\gaussian_splatting_hair
cd %PROJECT_DIR%\src\preprocessing
set CUDA_VISIBLE_DEVICES=%GPU%
python calc_orientation_maps.py --img_path %DATA_PATH%\images_2 --mask_path %DATA_PATH%\masks_2\hair --orient_dir %DATA_PATH%\orientations_2\angles --conf_dir %DATA_PATH%\orientations_2\vars --filtered_img_dir %DATA_PATH%\orientations_2\filtered_imgs --vis_img_dir %DATA_PATH%\orientations_2\vis_imgs
IF %ERRORLEVEL% NEQ 0 (
echo ERROR: Failed to run command
exit /b 1
)

REM Run OpenPose
CALL activate_openpose.bat
CALL "%MICROMAMBA_EXE%" activate -p %MAMBA_ROOT_PREFIX%\envs\openpose
cd %PROJECT_DIR%\ext\openpose
mkdir %DATA_PATH%\openpose
set CUDA_VISIBLE_DEVICES=%GPU%
"%PROJECT_DIR%\ext\openpose\build\x64\Release\OpenPoseDemo.exe" --image_dir %DATA_PATH%\images_4 --scale_number 4 --scale_gap 0.25 --face --hand --display 0 --write_json %DATA_PATH%\openpose\json --write_images %DATA_PATH%\openpose\images --write_images_format jpg
IF %ERRORLEVEL% NEQ 0 (
echo ERROR: Failed to run command
exit /b 1
)

REM Run Face-Alignment
CALL activate_gaussian_splatting_hair.bat
CALL "%MICROMAMBA_EXE%" activate -p %MAMBA_ROOT_PREFIX%\envs\gaussian_splatting_hair
cd %PROJECT_DIR%\src\preprocessing
set CUDA_VISIBLE_DEVICES=%GPU%
python calc_face_alignment.py --data_path %DATA_PATH% --image_dir "images_4"
IF %ERRORLEVEL% NEQ 0 (
echo ERROR: Failed to run command
exit /b 1
)

REM Run PIXIE
CALL activate_pixie-env.bat
CALL "%MICROMAMBA_EXE%" activate -p %MAMBA_ROOT_PREFIX%\envs\pixie-env
cd %PROJECT_DIR%\ext\PIXIE
set CUDA_VISIBLE_DEVICES=%GPU%
python demos\demo_fit_face.py -i %DATA_PATH%\images_4 -s %DATA_PATH%\pixie --saveParam True --lightTex False --useTex False --rasterizer_type pytorch3d
IF %ERRORLEVEL% NEQ 0 (
echo ERROR: Failed to run command
exit /b 1
)

REM Merge all PIXIE predictions into a single file
CALL activate_gaussian_splatting_hair.bat
CALL "%MICROMAMBA_EXE%" activate -p %MAMBA_ROOT_PREFIX%\envs\gaussian_splatting_hair
cd %PROJECT_DIR%\src\preprocessing
set CUDA_VISIBLE_DEVICES=%GPU%
python merge_smplx_predictions.py --data_path %DATA_PATH%
IF %ERRORLEVEL% NEQ 0 (
echo ERROR: Failed to run command
exit /b 1
)

REM Convert the COLMAP cameras to TXT
CALL activate_gaussian_splatting_hair.bat
CALL "%MICROMAMBA_EXE%" activate -p %MAMBA_ROOT_PREFIX%\envs\gaussian_splatting_hair
mkdir %DATA_PATH%\sparse_txt
set CUDA_VISIBLE_DEVICES=%GPU%
colmap model_converter --input_path %DATA_PATH%\sparse\0 --output_path %DATA_PATH%\sparse_txt --output_type TXT
IF %ERRORLEVEL% NEQ 0 (
echo ERROR: Failed to run command
exit /b 1
)

REM Convert the COLMAP cameras to the H3DS format
CALL activate_gaussian_splatting_hair.bat
CALL "%MICROMAMBA_EXE%" activate -p %MAMBA_ROOT_PREFIX%\envs\gaussian_splatting_hair
cd %PROJECT_DIR%\src\preprocessing
set CUDA_VISIBLE_DEVICES=%GPU%
python colmap_parsing.py --path_to_scene %DATA_PATH%
IF %ERRORLEVEL% NEQ 0 (
echo ERROR: Failed to run command
exit /b 1
)

REM Delete the original files to save disk space
rmdir /s /q %DATA_PATH%\input %DATA_PATH%\images %DATA_PATH%\masks %DATA_PATH%\iqa*
IF %ERRORLEVEL% NEQ 0 (
echo ERROR: Failed to run command
exit /b 1
)

REM Clean up temporary files
del /f /s /q %DATA_PATH%\*.tmp >nul 2>&1

REM ##################
REM # Reconstruction stage #
@ -114,84 +176,144 @@ REM ##################
set EXP_PATH_1=%DATA_PATH%\3d_gaussian_splatting\%EXP_NAME_1%

REM Run 3D Gaussian Splatting reconstruction
CALL activate_gaussian_splatting_hair.bat
CALL "%MICROMAMBA_EXE%" activate -p %MAMBA_ROOT_PREFIX%\envs\gaussian_splatting_hair
cd %PROJECT_DIR%\src
set CUDA_VISIBLE_DEVICES=%GPU%
python train_gaussians.py -s %DATA_PATH% -m "%EXP_PATH_1%" -r 1 --port "888%GPU%" --trainable_cameras --trainable_intrinsics --use_barf --lambda_dorient 0.1
IF %ERRORLEVEL% NEQ 0 (
    echo ERROR: Failed to run command
    exit /b 1
)
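REM Sanity check sketch (an editorial addition, not part of the original pipeline):
REM the FLAME fitting stages below read the camera file that train_gaussians.py is
REM expected to leave at iteration 30000, so fail early with a clear message if it is missing.
IF NOT EXIST "%EXP_PATH_1%\cameras\30000_matrices.pkl" (
    echo ERROR: Missing "%EXP_PATH_1%\cameras\30000_matrices.pkl"
    exit /b 1
)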

REM Run FLAME mesh fitting
CALL activate_gaussian_splatting_hair.bat
CALL "%MICROMAMBA_EXE%" activate -p %MAMBA_ROOT_PREFIX%\envs\gaussian_splatting_hair
cd %PROJECT_DIR%\ext\NeuralHaircut\src\multiview_optimization

set CUDA_VISIBLE_DEVICES=%GPU%
python fit.py --conf confs\train_person_1.conf --batch_size 1 --train_rotation True --fixed_images True --save_path %DATA_PATH%\flame_fitting\%EXP_NAME_1%\stage_1 --data_path %DATA_PATH% --fitted_camera_path %EXP_PATH_1%\cameras\30000_matrices.pkl
IF %ERRORLEVEL% NEQ 0 (
    echo ERROR: Failed to run command
    exit /b 1
)

python fit.py --conf confs\train_person_1.conf --batch_size 4 --train_rotation True --fixed_images True --save_path %DATA_PATH%\flame_fitting\%EXP_NAME_1%\stage_2 --checkpoint_path %DATA_PATH%\flame_fitting\%EXP_NAME_1%\stage_1\opt_params_final --data_path %DATA_PATH% --fitted_camera_path %EXP_PATH_1%\cameras\30000_matrices.pkl
IF %ERRORLEVEL% NEQ 0 (
    echo ERROR: Failed to run command
    exit /b 1
)

python fit.py --conf confs\train_person_1_.conf --batch_size 32 --train_rotation True --train_shape True --save_path %DATA_PATH%\flame_fitting\%EXP_NAME_1%\stage_3 --checkpoint_path %DATA_PATH%\flame_fitting\%EXP_NAME_1%\stage_2\opt_params_final --data_path %DATA_PATH% --fitted_camera_path %EXP_PATH_1%\cameras\30000_matrices.pkl
IF %ERRORLEVEL% NEQ 0 (
    echo ERROR: Failed to run command
    exit /b 1
)

REM Crop the reconstructed scene
CALL activate_gaussian_splatting_hair.bat
CALL "%MICROMAMBA_EXE%" activate -p %MAMBA_ROOT_PREFIX%\envs\gaussian_splatting_hair
cd %PROJECT_DIR%\src\preprocessing
set CUDA_VISIBLE_DEVICES=%GPU%
python scale_scene_into_sphere.py --path_to_data %DATA_PATH% -m "%DATA_PATH%\3d_gaussian_splatting\%EXP_NAME_1%" --iter 30000
IF %ERRORLEVEL% NEQ 0 (
    echo ERROR: Failed to run command
    exit /b 1
)

REM Remove hair Gaussians that intersect the FLAME head mesh
CALL activate_gaussian_splatting_hair.bat
CALL "%MICROMAMBA_EXE%" activate -p %MAMBA_ROOT_PREFIX%\envs\gaussian_splatting_hair
cd %PROJECT_DIR%\src\preprocessing
set CUDA_VISIBLE_DEVICES=%GPU%
python filter_flame_intersections.py --flame_mesh_dir %DATA_PATH%\flame_fitting\%EXP_NAME_1% -m "%DATA_PATH%\3d_gaussian_splatting\%EXP_NAME_1%" --iter 30000 --project_dir %PROJECT_DIR%\ext\NeuralHaircut
IF %ERRORLEVEL% NEQ 0 (
    echo ERROR: Failed to run command
    exit /b 1
)

REM Run rendering for the training views
CALL activate_gaussian_splatting_hair.bat
CALL "%MICROMAMBA_EXE%" activate -p %MAMBA_ROOT_PREFIX%\envs\gaussian_splatting_hair
cd %PROJECT_DIR%\src
set CUDA_VISIBLE_DEVICES=%GPU%
python render_gaussians.py -s %DATA_PATH% -m "%DATA_PATH%\3d_gaussian_splatting\%EXP_NAME_1%" --skip_test --scene_suffix "_cropped" --iteration 30000 --trainable_cameras --trainable_intrinsics --use_barf
IF %ERRORLEVEL% NEQ 0 (
    echo ERROR: Failed to run command
    exit /b 1
)

REM Get the FLAME mesh scalp maps
CALL activate_gaussian_splatting_hair.bat
CALL "%MICROMAMBA_EXE%" activate -p %MAMBA_ROOT_PREFIX%\envs\gaussian_splatting_hair
cd %PROJECT_DIR%\src\preprocessing
set CUDA_VISIBLE_DEVICES=%GPU%
python extract_non_visible_head_scalp.py --project_dir %PROJECT_DIR%\ext\NeuralHaircut --data_dir %DATA_PATH% --flame_mesh_dir %DATA_PATH%\flame_fitting\%EXP_NAME_1% --cams_path %DATA_PATH%\3d_gaussian_splatting\%EXP_NAME_1%\cameras\30000_matrices.pkl -m "%DATA_PATH%\3d_gaussian_splatting\%EXP_NAME_1%"
IF %ERRORLEVEL% NEQ 0 (
    echo ERROR: Failed to run command
    exit /b 1
)

REM Run latent hair strand reconstruction
CALL activate_gaussian_splatting_hair.bat
CALL "%MICROMAMBA_EXE%" activate -p %MAMBA_ROOT_PREFIX%\envs\gaussian_splatting_hair
cd %PROJECT_DIR%\src
set CUDA_VISIBLE_DEVICES=%GPU%
python train_latent_strands.py -s %DATA_PATH% -m "%DATA_PATH%\3d_gaussian_splatting\%EXP_NAME_1%" -r 1 --model_path_hair "%DATA_PATH%\strands_reconstruction\%EXP_NAME_2%" --flame_mesh_dir "%DATA_PATH%\flame_fitting\%EXP_NAME_1%" --pointcloud_path_head "%EXP_PATH_1%\point_cloud_filtered\iteration_30000\raw_point_cloud.ply" --hair_conf_path "%PROJECT_DIR%\src\arguments\hair_strands_textured.yaml" --lambda_dmask 0.1 --lambda_dorient 0.1 --lambda_dsds 0.01 --load_synthetic_rgba --load_synthetic_geom --binarize_masks --iteration_data 30000 --trainable_cameras --trainable_intrinsics --use_barf --iterations 20000 --port "800%GPU%"
IF %ERRORLEVEL% NEQ 0 (
    echo ERROR: Failed to run command
    exit /b 1
)
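REM Sanity check sketch (an editorial addition, not part of the original pipeline):
REM train_strands.py below starts from the latent-strand checkpoint at iteration 20000,
REM so stop here with a clear message if that file was not written.
IF NOT EXIST "%DATA_PATH%\strands_reconstruction\%EXP_NAME_2%\checkpoints\20000.pth" (
    echo ERROR: Missing "%DATA_PATH%\strands_reconstruction\%EXP_NAME_2%\checkpoints\20000.pth"
    exit /b 1
)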

REM Run hair strand reconstruction
CALL activate_gaussian_splatting_hair.bat
CALL "%MICROMAMBA_EXE%" activate -p %MAMBA_ROOT_PREFIX%\envs\gaussian_splatting_hair
cd %PROJECT_DIR%\src
set CUDA_VISIBLE_DEVICES=%GPU%
python train_strands.py -s %DATA_PATH% -m "%DATA_PATH%\3d_gaussian_splatting\%EXP_NAME_1%" -r 1 --model_path_curves "%DATA_PATH%\curves_reconstruction\%EXP_NAME_3%" --flame_mesh_dir "%DATA_PATH%\flame_fitting\%EXP_NAME_1%" --pointcloud_path_head "%EXP_PATH_1%\point_cloud_filtered\iteration_30000\raw_point_cloud.ply" --start_checkpoint_hair "%DATA_PATH%\strands_reconstruction\%EXP_NAME_2%\checkpoints\20000.pth" --hair_conf_path "%PROJECT_DIR%\src\arguments\hair_strands_textured.yaml" --lambda_dmask 0.1 --lambda_dorient 0.1 --lambda_dsds 0.01 --load_synthetic_rgba --load_synthetic_geom --binarize_masks --iteration_data 30000 --position_lr_init 0.0000016 --position_lr_max_steps 10000 --trainable_cameras --trainable_intrinsics --use_barf --iterations 10000 --port "800%GPU%"
IF %ERRORLEVEL% NEQ 0 (
    echo ERROR: Failed to run command
    exit /b 1
)

rmdir /s /q "%DATA_PATH%\3d_gaussian_splatting\%EXP_NAME_1%\train_cropped"
IF %ERRORLEVEL% NEQ 0 (
    echo ERROR: Failed to run command
    exit /b 1
)

REM ##################
REM # Visualization stage #
REM ##################
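REM Sanity check sketch (an editorial addition, not part of the original pipeline):
REM export_curves.py below reads the stage-3 FLAME mesh and the scalp mesh, so verify
REM that both files exist before starting the visualization steps.
FOR %%F IN ("%DATA_PATH%\flame_fitting\%EXP_NAME_1%\stage_3\mesh_final.obj" "%DATA_PATH%\flame_fitting\%EXP_NAME_1%\scalp_data\scalp.obj") DO (
    IF NOT EXIST %%F (
        echo ERROR: Missing %%F
        exit /b 1
    )
)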

REM Export the resulting strands in pkl and ply formats
CALL activate_gaussian_splatting_hair.bat
CALL "%MICROMAMBA_EXE%" activate -p %MAMBA_ROOT_PREFIX%\envs\gaussian_splatting_hair
cd %PROJECT_DIR%\src\preprocessing
set CUDA_VISIBLE_DEVICES=%GPU%
python export_curves.py --data_dir %DATA_PATH% --model_name %EXP_NAME_3% --iter 10000 --flame_mesh_path "%DATA_PATH%\flame_fitting\%EXP_NAME_1%\stage_3\mesh_final.obj" --scalp_mesh_path "%DATA_PATH%\flame_fitting\%EXP_NAME_1%\scalp_data\scalp.obj" --hair_conf_path "%PROJECT_DIR%\src\arguments\hair_strands_textured.yaml"
IF %ERRORLEVEL% NEQ 0 (
    echo ERROR: Failed to run command
    exit /b 1
)

REM Render the visualizations
CALL activate_gaussian_splatting_hair.bat
CALL "%MICROMAMBA_EXE%" activate -p %MAMBA_ROOT_PREFIX%\envs\gaussian_splatting_hair
cd %PROJECT_DIR%\src\postprocessing
set CUDA_VISIBLE_DEVICES=%GPU%
python render_video.py --blender_path "%BLENDER_DIR%" --input_path "%DATA_PATH%" --exp_name_1 "%EXP_NAME_1%" --exp_name_3 "%EXP_NAME_3%"
IF %ERRORLEVEL% NEQ 0 (
    echo ERROR: Failed to run command
    exit /b 1
)

REM Render the strands
REM cmd passes single quotes through literally, so the device name is given unquoted
CALL activate_gaussian_splatting_hair.bat
CALL "%MICROMAMBA_EXE%" activate -p %MAMBA_ROOT_PREFIX%\envs\gaussian_splatting_hair
cd %PROJECT_DIR%\src
set CUDA_VISIBLE_DEVICES=%GPU%
python render_strands.py -s %DATA_PATH% --data_dir "%DATA_PATH%" --data_device cpu --skip_test -m "%DATA_PATH%\3d_gaussian_splatting\%EXP_NAME_1%" --iteration 30000 --flame_mesh_dir "%DATA_PATH%\flame_fitting\%EXP_NAME_1%" --model_hair_path "%DATA_PATH%\curves_reconstruction\%EXP_NAME_3%" --hair_conf_path "%PROJECT_DIR%\src\arguments\hair_strands_textured.yaml" --checkpoint_hair "%DATA_PATH%\strands_reconstruction\%EXP_NAME_2%\checkpoints\20000.pth" --checkpoint_curves "%DATA_PATH%\curves_reconstruction\%EXP_NAME_3%\checkpoints\10000.pth" --pointcloud_path_head "%EXP_PATH_1%\point_cloud\iteration_30000\raw_point_cloud.ply" --interpolate_cameras
IF %ERRORLEVEL% NEQ 0 (
    echo ERROR: Failed to run command
    exit /b 1
)

REM Make the video
CALL activate_gaussian_splatting_hair.bat
CALL "%MICROMAMBA_EXE%" activate -p %MAMBA_ROOT_PREFIX%\envs\gaussian_splatting_hair
cd %PROJECT_DIR%\src\postprocessing
set CUDA_VISIBLE_DEVICES=%GPU%
python concat_video.py --input_path "%DATA_PATH%" --exp_name_3 "%EXP_NAME_3%"
IF %ERRORLEVEL% NEQ 0 (
    echo ERROR: Failed to run command
    exit /b 1
)
